Understanding the calculation of the kappa statistic: A measure of inter-observer reliability | Semantic Scholar

The kappa coefficient of agreement. This equation measures the fraction... | Download Scientific Diagram

Understanding the calculation of the kappa statistic: A measure of inter-observer reliability Mishra SS, Nitika - Int J Acad Med

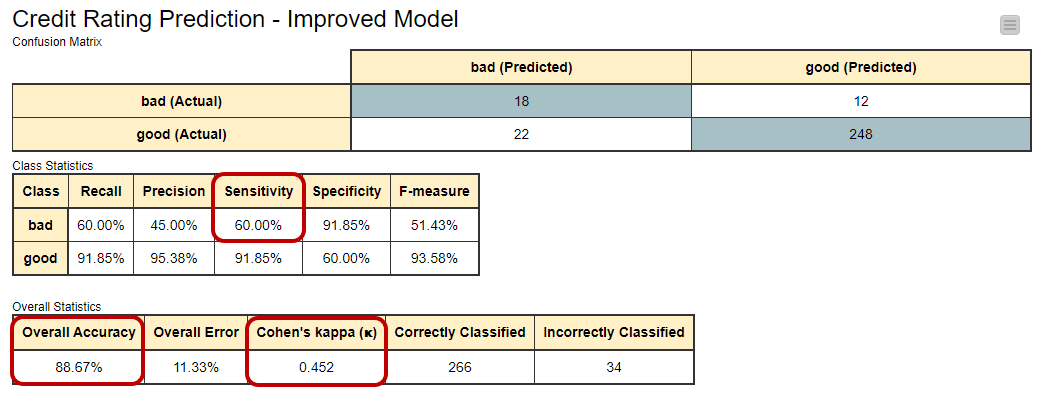

Kappa Coefficient for Dummies. How to measure the agreement between… | by Aditya Kumar | AI Graduate | Medium

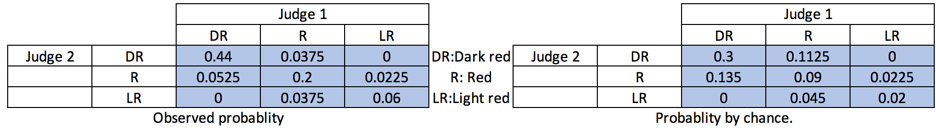

![PDF] More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar PDF] More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/79de97d630ca1ed5b1b529d107b8bb005b2a066b/1-Figure1-1.png)

PDF] More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar

![PDF] Understanding interobserver agreement: the kappa statistic. | Semantic Scholar PDF] Understanding interobserver agreement: the kappa statistic. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table2-1.png)

![PDF] Understanding interobserver agreement: the kappa statistic. | Semantic Scholar PDF] Understanding interobserver agreement: the kappa statistic. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table3-1.png)

![PDF] Interrater reliability: the kappa statistic | Semantic Scholar PDF] Interrater reliability: the kappa statistic | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/bf3a7271860b1667e3ceb84e5bc400d2635ff8b7/5-Figure3-1.png)